The Modern Data Engineer Part 2: Cargo vs Code

The biggest blocker: treating ingested data as software state instead of cargo moved by ingestion pipelines

In part 1, I argued that understanding data is the core skill and designing for change is a must.

The latter brings me to part 2, the biggest transition problem I keep seeing when software engineers move into data engineering:

They treat the incoming data as an integral part of the software, instead of treating it as cargo that ingestion software is moving.

That one mental model error creates most of the pain.

A software engineer is used to owning their system end-to-end. They control the input, they control the logic, they control the output. If something breaks, it is their code or their assumptions. This works beautifully for application development.

But data is not application state. It is external reality flowing through your pipelines. You can negotiate contracts, version APIs, build adapters. But you cannot prevent a source system from changing underneath you. You cannot stop a vendor from adding a field, a date format from shifting, or data arriving late. You do not control the evolution of upstream systems.

The moment you embed the assumption that incoming data will always match your expectations, your engineering breaks. Not because your code is bad, but because you are engineering against something you do not control.

1. The Core Mistake

- Ingestion pipelines are software systems.

- Source data is external cargo.

- Cargo is messy, late, inconsistent, and outside your code ownership.

When engineers make payload shape part of their software contract, they couple themselves to something they do not control.

2. How the Coupling Happens in Practice

It starts innocently. A software engineer builds a clean ingestion pipeline. They write it well, with clear structure and good error handling. Then they write tests, like any responsible engineer would.

Here is where the anti-pattern takes hold:

Tests are performed against the actual payload shape from a production source system. Assertions target the presence of specific fields. Nested JSON structures are validated directly in the pipeline logic. Real data is snapshotted and expectations are hardcoded around it.

This feels right. You are testing reality. But you are actually coupling your transport layer to external chaos.

Now a source system adds an optional field. Your test fails because the payload shape changed. Now you have a choice: update the pipeline to handle both shapes, or update the test to ignore the new field. Either way, you just tightened the coupling.

Six months later, a vendor deprecates an API endpoint and renames half their response fields. Your tests were written against the old shape. Your pipeline logic is hardcoded around those old field names. The ingestion fails. Not because your code is wrong, but because you embedded external reality into your engineering assumptions.

The real problem: you wrote unit tests that validate cargo, not the vehicle.

You should have tested that your pipeline handles payload shape changes. That it routes to the right place. That it retries on failure. That it is idempotent. These are software concerns. Instead, you tested that “user_id” exists and is an integer. That is a data concern, and it will change.
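To make the difference concrete, here is a minimal sketch of a vehicle test. Everything in it is an assumption for illustration: the `FIELD_ALIASES` mapping, the `ingest` function, and the field names are hypothetical, not taken from any real pipeline. The test does not assert what the source sends. It asserts that the pipeline keeps moving when the shape changes.

```python
from typing import Any

# Hypothetical mapping: target column -> source keys it may arrive under.
FIELD_ALIASES = {
    "user_id": ("user_id", "userId"),
    "email": ("email",),
}


def map_record(payload: dict) -> dict:
    """Pick known fields by alias, ignore unknown ones, never raise on shape."""
    mapped = {}
    for target, aliases in FIELD_ALIASES.items():
        mapped[target] = next((payload[a] for a in aliases if a in payload), None)
    return mapped


def ingest(payloads: list) -> tuple:
    """Return (landed, quarantined). Non-object payloads are set aside, not fatal."""
    landed, quarantined = [], []
    for payload in payloads:
        if isinstance(payload, dict):
            # Keep the raw payload next to the mapped view so nothing is lost.
            landed.append({"raw": payload, "mapped": map_record(payload)})
        else:
            quarantined.append(payload)
    return landed, quarantined


def test_ingest_survives_shape_changes():
    batch: list[Any] = [
        {"user_id": 1, "email": "a@example.com"},
        {"user_id": 2, "email": "b@example.com", "new_optional_field": "x"},
        {"userId": 3},            # renamed field, missing email
        "not even an object",     # garbage payload
    ]
    landed, quarantined = ingest(batch)
    assert len(landed) == 3
    assert quarantined == ["not even an object"]
    assert landed[2]["mapped"] == {"user_id": 3, "email": None}
    assert landed[1]["raw"]["new_optional_field"] == "x"
```

Whether a missing `email` is acceptable is a separate question, and it is a data question. The test above only proves the vehicle does not crash.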

3. The Databricks CI Smell

When you couple your software tests to external data, you hit a hard wall: local testing becomes impossible.

Your laptop does not have access to production-like data. Neither does your CI runner. So teams solve this by running tests on Databricks clusters, or Snowflake, or whatever data platform is in use. They provision a cluster, spin up for three minutes, run the test, tear down.

Now your engineering feedback loop is bound to cluster startup time. What should be a five-second unit test turns into a three-minute cell restart, then a five-minute cluster boot, and your feedback cycle stretches to ten minutes.

This is not maturity. This is accidental architecture. The pattern that takes hold is transport software coupled to the data platform. Now you cannot test without the platform. Now you cannot debug locally. Now a junior engineer cannot iterate fast, because every change means waiting on the platform.

The worst part: this pattern becomes invisible inside data teams because platform-bound testing feels normal. Outside data engineering, teams solve this problem entirely differently. They test transport, not cargo. Their tests run in milliseconds locally. Their CI runners do not look like cloud bills.

This is your signal that something is wrong. When your engineering practice requires the platform to validate itself, you have confused concerns. The platform should validate whether your pipeline preserves data integrity. Your tests should validate whether your pipeline software works correctly in isolation.

4. Why This Holds Software Engineers Back

The software engineers who struggle most with data are often the ones with the strongest software discipline. They are used to owning their domain, writing tests that cover all branches and proving everything works together.

But data engineering is different. Applied here, that discipline becomes a liability. This is where things naturally drift: code quality gets optimized while data boundaries are missed. Beautiful wrapper classes get written around messy JSON when simpler parsers that fail fast and loud would work better. Weeks get spent “hardening” solutions against data volatility, when the real problem is testing the wrong thing.

The result: slow iteration, brittle tests, reduced trust in outputs. Solutions that work for one data source break when you add another. Difficult-to-debug code where you need the actual data to understand what the pipeline does.

This is not weakness. It is a gap. A highly disciplined software/data engineer can learn to separate concerns between code and cargo. It just requires seeing that the problem is different.

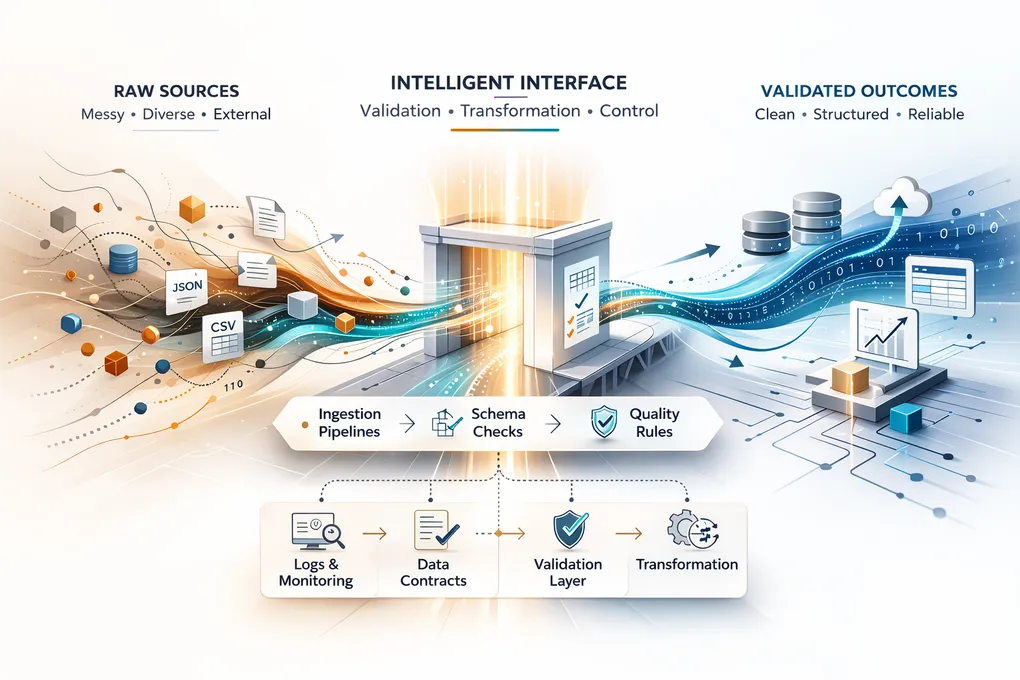

5. Better Pattern: Test the Transport, Guard the Cargo

Treat ingestion like logistics engineering. You are building a vehicle. The data is what you are moving. These are two different problems.

5.1 Test software behavior in isolation

Your pipeline logic does not need real data to be testable. It needs representative data. Synthetic data. Data that exercises all the paths your code can take.

Parse and map with synthetic test fixtures. Validate that your idempotency key works correctly on duplicates. Validate that your retry logic actually retries. Validate that your error handling produces logs that a human can debug. Validate that your normalization logic handles nulls, empty strings, unexpected types, and missing fields.

This is testing the vehicle. You are proving that the transport mechanism works correctly. Your tests run in milliseconds. They run locally. They do not require the data platform. A junior engineer can understand what they do and modify them without tribal knowledge.
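As a rough sketch of what that can look like, the snippet below covers two of those checks with synthetic inputs only: normalization of awkward values and idempotent loading. The function names, the `amount` field, and the in-memory dict standing in for a table are all assumptions made for the example.

```python
import hashlib
import json


def normalize_amount(value):
    """Coerce an 'amount' to float, or None when it cannot be parsed."""
    if value is None or isinstance(value, bool):
        return None
    if isinstance(value, (int, float)):
        return float(value)
    if isinstance(value, str):
        text = value.strip()
        if not text:
            return None
        try:
            return float(text)
        except ValueError:
            return None
    return None  # lists, dicts, and other unexpected types


def idempotency_key(record: dict) -> str:
    """Stable hash of the record content, independent of key order."""
    canonical = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def load(batch: list, table: dict) -> int:
    """Insert records into an in-memory 'table' keyed by idempotency key.

    Returns the number of rows actually written, so a replayed batch writes 0.
    """
    written = 0
    for record in batch:
        key = idempotency_key(record)
        if key not in table:
            table[key] = record
            written += 1
    return written


def test_replayed_batch_writes_nothing():
    table = {}
    batch = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 20.0}]
    assert load(batch, table) == 2
    assert load(batch, table) == 0   # same batch arrives twice
    assert len(table) == 2
```

Nothing here touches a cluster or a real source. The fixtures are invented on purpose, so they can cover the ugly cases production data may not show you on the day you write the test.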

5.2 Validate cargo with contracts and quality checks

Now, separately, validate that the cargo matches what you expect. This is not a software test. It is a data contract check.

Schema validation at ingestion boundaries. Does the incoming payload match the expected shape? If not, what do you do? Reject it, flag it, transform it? Your choice. But this is a data concern, not a software concern.
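One way to keep this a data concern is to express the contract as data and let the boundary apply a policy to it. The sketch below is a simplified illustration under my own assumptions: the `CONTRACT` structure, the field names, and the `"reject"` / `"flag"` policy values are hypothetical, and a real setup would more likely use a schema language or a registry.

```python
# Hypothetical contract, kept as data so it can change without touching pipeline code.
CONTRACT = {
    "required": {"order_id": int, "amount": (int, float)},
    "on_violation": "flag",  # assumed policy values: "reject" or "flag"
}


def check_contract(payload: dict, contract: dict) -> list:
    """Return a list of human-readable violations; an empty list means the payload conforms."""
    violations = []
    for field, expected in contract["required"].items():
        if field not in payload:
            violations.append(f"missing field: {field}")
            continue
        value = payload[field]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            violations.append(f"unexpected type for {field}: {type(value).__name__}")
    return violations


def apply_policy(payload: dict, contract: dict) -> tuple:
    """Decide what happens to a payload: accepted, flagged, or rejected."""
    violations = check_contract(payload, contract)
    if not violations:
        return "accepted", violations
    decision = "rejected" if contract["on_violation"] == "reject" else "flagged"
    return decision, violations


decision, problems = apply_policy({"order_id": "A-17"}, CONTRACT)
print(decision, problems)
```

Extra fields pass through untouched. The check reports what it found and the policy decides what to do, which keeps "what is wrong with the cargo" apart from "is the vehicle working".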

Semantic validation deeper in the platform. Freshness checks: is today’s data arriving on schedule? Completeness checks: are required fields populated? Distribution checks: are numeric fields in expected ranges? Business-rule monitoring: does the revenue total match what finance expects?

These checks live in the data platform. They validate the cargo after you have safely moved it. They run against real data. They change as business logic changes. They do not break your engineering tests when a source system gets upgraded.
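In practice these checks are usually written in SQL or in a data quality tool on the platform. Purely to show the shape of the logic, here is a small Python sketch of a freshness, a completeness, and a distribution check. The function names, thresholds, and the `amount` field are assumptions for the example.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(latest_event_time: datetime, now: datetime, max_lag: timedelta) -> bool:
    """Freshness: did the newest record arrive within the allowed lag?"""
    return now - latest_event_time <= max_lag


def completeness(rows: list, field: str) -> float:
    """Completeness: fraction of rows where the field is populated."""
    if not rows:
        return 0.0
    populated = sum(1 for row in rows if row.get(field) not in (None, ""))
    return populated / len(rows)


def out_of_range(rows: list, field: str, low: float, high: float) -> list:
    """Distribution: rows whose numeric value falls outside the expected range."""
    return [
        row for row in rows
        if row.get(field) is not None and not (low <= row[field] <= high)
    ]


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
rows = [{"amount": 10}, {"amount": None}, {"amount": 5000}, {}]

print(is_fresh(now - timedelta(hours=2), now, max_lag=timedelta(hours=6)))
print(completeness(rows, "amount"))
print(out_of_range(rows, "amount", low=0, high=1000))
```

A failing check here raises an alert for someone to investigate. It does not turn a CI build red, because nothing about the pipeline code changed.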

5.3 Separate Concerns by Test Tier

Unit tests should validate that your pipeline function does what it claims: extract data from a source, transform it according to your logic, and load it to your platform.

Test the behavior: “Does my pipeline retry on transient failure?” “Does it route data to the correct destination?” “Does my error handler produce logs a human can debug?” “Does my load step write to the correct table?” “Is the pipeline idempotent when the same batch arrives twice?”
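The first of those questions can be answered without any real source at all. Below is a minimal sketch, assuming a hypothetical `TransientError` exception and a fake source that fails a fixed number of times. The sleep function is injected so the test does not actually wait.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling)."""


def fetch_with_retry(fetch, max_attempts=3, sleep=time.sleep, base_delay=0.5):
    """Call fetch(), retrying on TransientError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts, surface the failure
            sleep(base_delay * 2 ** (attempt - 1))


class FlakySource:
    """Fake source that fails a fixed number of times before succeeding."""

    def __init__(self, failures: int):
        self.failures = failures
        self.calls = 0

    def __call__(self):
        self.calls += 1
        if self.calls <= self.failures:
            raise TransientError("simulated timeout")
        return [{"id": 1}]


def test_retries_on_transient_failure():
    source = FlakySource(failures=2)
    delays = []
    result = fetch_with_retry(source, sleep=delays.append)
    assert result == [{"id": 1}]
    assert source.calls == 3
    assert delays == [0.5, 1.0]   # backoff doubled between attempts
```

The payload returned by the fake source is deliberately trivial. The test is about the retry behavior, so the cargo can be anything.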

Please, do not test: “Does the source system send exactly these fields in exactly this format?” I cannot stress this enough. Schema validation is not a unit testing concern. It is an operational concern. It belongs in your data platform as a runtime contract check, not in your CI pipeline as an assertion. When you put schema validation in your unit tests, you are coupling your engineering feedback loop to external reality. When you put it in operations, you are monitoring the cargo independently of the vehicle.

Integration tests use controlled interfaces and representative samples. These prove that your pipeline orchestration works. That dependencies are wired correctly. That error paths are handled. These can be platform-local or run against a small staging instance, but they should not require production data volume or production cluster sizes.

Data quality checks validate production data reality and drift. These run continuously against real data in the production platform. They validate that your assumptions about the cargo still hold. They alert when ETL teams need to investigate or when downstream users need to understand what changed.

These are three different test strategies for three different purposes. Conflating them is where teams get stuck.

One objection I hear often: “But we use Avro schemas with a schema registry” or “We have versioned API contracts.” Good. You should. But even with schema registries and versioned APIs, the ownership boundary still exists. You control how you respond to schema change. You do not control when it happens. The contract helps you detect change earlier. It does not eliminate it. Your engineering still needs to handle the moment when the contract breaks.

6. The Punchline

Software engineers entering data engineering already have one huge advantage: they know how to build reliable systems. They understand testing. They understand separation of concerns. They understand that coupling is a liability.

And here is what I keep seeing: software engineers who nail this boundary make the transition faster than anyone else.

Code is the vehicle. Data is the cargo. You engineer the vehicle. You validate the cargo. When you confuse the two, you couple your engineering system to external chaos. You make your tests depend on forces outside your control. You make your feedback loops slow. You make your code brittle.

When you separate them, something changes. Your pipeline logic becomes testable, repeatable, fast. Your data quality monitoring becomes continuous, not reactive. Your ingestion scales because adding a new source does not require rewriting old tests. Onboarding a new engineer becomes possible because they do not need to understand why the tests require Databricks.

This is not just a testing philosophy. This is the difference between a pipeline that works today and a system that works for years. This is the difference between moving data and engineering truth.

In part 1, I said the core skill is understanding data. That is true. But to apply that skill at scale, you need this: the discipline to separate what you control from what you do not, and to engineer only for what you control.

The cargo will change. The vehicle should stay strong.
