The Modern Data Engineer Part 4B: Operating the Silver Layer in Practice

Principles, governance, ownership, and an implementation blueprint for operating the Silver semantic layer in practice.

Part 4A diagnosed why the Silver-Gold boundary fails and introduced Conformed Entities as the solution pattern. Designing that boundary is only half the work. This part covers how to operate it in practice: what the layer actually needs, why governance keeps failing at the same step, how to implement without disrupting what already runs, and the signal that tells you whether it is working.

1. What the Middle Layer Actually Needs

Most data platforms have a Bronze layer and a Gold layer. What they are missing is the governed semantic contract in between, and that gap is usually where platform trust collapses. When teams get this middle layer right, it changes how the entire platform operates: metric disputes shrink, analyst self-serve becomes real, and the dependency on a handful of people who hold context in their heads starts to dissolve. It is not a nice-to-have architectural refinement. It is the part of the platform that determines whether the rest of it actually delivers on its promise.

Building it well means being opinionated about what belongs here and practical about where to start. That means settling high-reuse definitions first and resisting the pull toward modeling every edge case upfront. Teams that go for completeness too early usually produce complexity before they produce value, and that complexity is often mistaken for maturity.

Definitions must be materialized, not only documented. Shared logic should exist in reusable models that teams actually consume, not in wiki pages that diverge silently under delivery pressure.

If this layer is a contract, it needs enforcement. The most important thing to test is key integrity. The conformed identifier on each entity must be unique and non-null, and the relationships between entities must hold: if a conformed order references a conformed customer, that link should be verifiable. These checks are simple, tool-agnostic, and catch the failures that corrupt everything downstream. Beyond key integrity, enforce one more thing: definitions must be versioned and communicated intentionally. If your semantic models live in code, that means Git, which gives you history, diffs, and a review process for every change to a shared definition. Meaning changes over time, and a silent refactor of a shared semantic model is a silent breakage for every consumer depending on it.
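To make the key integrity checks concrete, here is a minimal sketch in plain Python. The table contents, column names, and the helper names `check_key_integrity` and `check_relationship` are all hypothetical and chosen for illustration. In a dbt project the same three checks would typically be expressed with the built-in `unique`, `not_null`, and `relationships` tests instead.

```python
from collections import Counter


def check_key_integrity(rows, key):
    """Return a list of violations: null keys and duplicated keys."""
    violations = []
    keys = [row.get(key) for row in rows]

    null_count = sum(1 for k in keys if k is None)
    if null_count:
        violations.append(f"{null_count} row(s) with null {key}")

    counts = Counter(k for k in keys if k is not None)
    duplicates = sorted(k for k, n in counts.items() if n > 1)
    if duplicates:
        violations.append(f"duplicate {key}: {duplicates}")
    return violations


def check_relationship(child_rows, child_key, parent_rows, parent_key):
    """Return child key values that have no matching parent row."""
    parent_keys = {
        row[parent_key] for row in parent_rows if row.get(parent_key) is not None
    }
    return sorted(
        {
            row[child_key]
            for row in child_rows
            if row.get(child_key) is not None and row[child_key] not in parent_keys
        }
    )


# Hypothetical sample data standing in for two conformed entities.
customers = [
    {"customer_id": "C1", "name": "Acme"},
    {"customer_id": "C2", "name": "Globex"},
    {"customer_id": "C2", "name": "Globex BV"},  # duplicate key
    {"customer_id": None, "name": "Unknown"},    # null key
]
orders = [
    {"order_id": "O1", "customer_id": "C1"},
    {"order_id": "O2", "customer_id": "C9"},     # references a missing customer
]

customer_violations = check_key_integrity(customers, "customer_id")
orphan_customers = check_relationship(orders, "customer_id", customers, "customer_id")
```

Here `customer_violations` reports one null key and the duplicated `C2`, and `orphan_customers` contains `C9`. Whatever tool runs the checks, the point is that any non-empty result should block the change rather than produce a warning nobody reads.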

Freshness is part of the contract, not an operational afterthought. Finance truth often needs slower, stricter cycles. Product and operations signals often need faster cycles with tolerance for late adjustment. One global latency target is just rigidity in another form.
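One way to express freshness as part of the contract is a per-entity target rather than a single global threshold. The sketch below assumes hypothetical entity names and SLA values; the real numbers are a decision for the domain owners, not something this example can prescribe.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-entity freshness targets: slow and strict for finance,
# fast for product signals.
FRESHNESS_SLA = {
    "finance_revenue": timedelta(hours=24),
    "product_events": timedelta(minutes=30),
}


def stale_entities(last_loaded_at, now, sla=FRESHNESS_SLA):
    """Return entities whose latest load is missing or older than their own SLA."""
    stale = []
    for entity, max_age in sla.items():
        loaded = last_loaded_at.get(entity)
        if loaded is None or now - loaded > max_age:
            stale.append(entity)
    return sorted(stale)


# Both entities were loaded six hours ago; only one of them breaks its contract.
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
last_loaded_at = {
    "finance_revenue": now - timedelta(hours=6),
    "product_events": now - timedelta(hours=6),
}
stale = stale_entities(last_loaded_at, now)
```

The same six-hour-old load is acceptable for `finance_revenue` and a breach for `product_events`, which is exactly the distinction a single global latency target cannot express.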

2. Why Governance Fails Without Traceability

Technical design without ownership decays. But ownership without traceability decays just as fast.

The documentation trap

Governance only creates value when connected to runtime delivery. A glossary entry by itself does not prevent metric drift. It helps only when the definition is linked to the models that implement it, the owners who approve changes, and the lineage that shows where it comes from.

Most governance programs stall at the documentation step. Teams write definitions in a wiki, assign nominal owners, and call it done. Then a source field changes, a model gets refactored, and the glossary entry silently falls out of sync. Nobody notices until a metric is wrong in a board presentation.

Connecting definitions to delivery

For each critical business definition you should be able to answer five questions in minutes, not days: what it means, who owns it, where it is implemented, which marts consume it, and which source fields it depends on. If one of those links is missing, trust degrades and teams fall back to local logic.
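The five questions can be treated as five required links on a record. The following is a minimal sketch, assuming a hypothetical `DefinitionRecord` structure and an invented example definition; a real catalog would hold this metadata, but the completeness check works the same way.

```python
from dataclasses import dataclass, field


@dataclass
class DefinitionRecord:
    """One critical business definition and its links to delivery."""
    name: str
    meaning: str = ""                                   # what it means
    owner: str = ""                                     # who owns it
    implemented_in: list = field(default_factory=list)  # where it is implemented
    consumed_by: list = field(default_factory=list)     # which marts consume it
    source_fields: list = field(default_factory=list)   # which source fields it depends on

    def missing_links(self):
        """Return the questions that cannot be answered from this record."""
        links = {
            "meaning": self.meaning,
            "owner": self.owner,
            "implemented_in": self.implemented_in,
            "consumed_by": self.consumed_by,
            "source_fields": self.source_fields,
        }
        return [question for question, answer in links.items() if not answer]


# Hypothetical definition: the meaning, owner, and model name are illustrative only.
active_customer = DefinitionRecord(
    name="active_customer",
    meaning="Customer with at least one paid order in the last 90 days",
    owner="sales-domain",
    implemented_in=["silver.conformed_customer"],
)
gaps = active_customer.missing_links()
```

For this record `gaps` lists `consumed_by` and `source_fields`, so two of the five questions would still require asking a person.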

This is where platform work and governance stop being separate tracks. The glossary should connect to catalog metadata, model contracts, test status, and lineage graphs that run from source systems through Silver contracts to Gold marts. When an upstream field changes, the impact should be visible at the metric and dashboard level before any consumer notices it.
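Impact analysis on a lineage graph is a plain graph traversal. The sketch below uses a hypothetical, hand-written lineage dictionary with invented node names; in practice the edges would come from whatever your catalog or modeling tool exports.

```python
from collections import deque

# Hypothetical lineage: each node maps to the nodes that consume it directly.
LINEAGE = {
    "crm.contact.status": ["silver.customer"],
    "silver.customer": ["gold.active_customers", "gold.churn"],
    "gold.active_customers": ["dashboard.exec_kpis"],
    "gold.churn": [],
    "dashboard.exec_kpis": [],
}


def downstream_impact(graph, changed_node):
    """Return every node reachable downstream of changed_node (breadth-first)."""
    seen = set()
    queue = deque([changed_node])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(seen)


impacted = downstream_impact(LINEAGE, "crm.contact.status")
```

A change to the source field `crm.contact.status` surfaces the Silver contract, both Gold marts, and the executive dashboard. The traversal itself is trivial; the hard part is making sure the edges from Gold into the BI tool exist in the graph at all.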

The payoff is immediate and operational: faster incident triage, fewer conflicting KPI definitions, safer schema changes, and less time in reconciliation meetings. Governance maturity is not more policy text. It is definition traceability wired into day-to-day analytics delivery.

Ownership roles

Platform engineering owns Bronze ingestion and Silver-cleaned reliability. Analytics engineering owns Silver-integrated semantics and metric contracts. Data domain owners own business meaning for critical entities and KPIs. Data stewards own governance quality: searchable definitions, complete lineage, current ownership metadata. BI teams consume Gold marts and apply report-level semantics on top; exceptions flow back through governed change, not permanent local forks.

The handoff between technical and domain ownership matters more than most teams acknowledge. Bronze and Silver-cleaned are primarily engineering concerns: source fidelity, quality controls, operational robustness. As you move into Silver-integrated semantics and Gold, the work becomes increasingly about domain interpretation, and that requires analytics engineering, BI, and consultants with real business context to be in the room. Domain owners approve business definitions. Analytics engineering implements them as tested models. When those definitions are implemented in code, discoverable in a catalog, and traceable back to source systems, you have reached a useful level of governance maturity. Not before.

3. Implementation Blueprint (Without Overengineering)

You do not need a 12-month transformation program. The Silver progression is additive by design, which means you can introduce a Conformed Entities sublayer on top of your existing source-aligned Silver without touching anything downstream. Your current Gold marts keep running. You introduce the new contract layer, migrate one mart to consume it, and validate. Nothing breaks until you deliberately move something. That is what makes this practical in a live platform rather than a greenfield exercise.

Start with one vertical slice through a domain where the pain is already visible:

- Pick one domain with recurring metric disputes. Active customers or revenue recognition are common starting points.

- Keep your current Silver as-is.

- Build one Conformed Entities model that codifies the agreed logic for that domain.

- Build or refactor one Gold mart to consume it.

- Repoint one high-visibility dashboard to that mart.

- Measure what changed: time to ship a metric change, number of conflicting definitions still in circulation, how often analysts can answer a question without asking the platform team.

- Expand domain by domain.

A Conformed Entity, as I defined it in Part 4A, is a table where all relevant information about an entity comes together under a conformed identifier. Conformed because the identifier is stable and agreed upon across sources. Integrated because the relevant attributes are assembled in one place rather than scattered across joins. That combination is what allows Gold marts and downstream consumers to stop rebuilding identity and metric logic from scratch every time.
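As an illustration of the two properties, here is a minimal sketch that assembles a conformed customer from two source systems. The crosswalk, source names, and columns are hypothetical, and a real implementation would normally live in SQL models rather than Python; the shape of the logic is what matters.

```python
# Hypothetical crosswalk: (source system, source key) -> conformed identifier.
ID_CROSSWALK = {
    ("crm", "A-17"): "CUST-001",
    ("billing", "9001"): "CUST-001",
    ("crm", "A-18"): "CUST-002",
}

# Hypothetical source-aligned Silver rows.
crm_rows = [
    {"crm_id": "A-17", "name": "Acme", "segment": "Enterprise"},
    {"crm_id": "A-18", "name": "Globex", "segment": "SMB"},
]
billing_rows = [
    {"account_no": "9001", "payment_terms": "NET30"},
]


def build_conformed_customers(crm_rows, billing_rows, crosswalk):
    """Assemble one row per conformed customer_id from both sources.

    An unmapped source key raises KeyError, so identity gaps fail loudly
    instead of silently creating a second customer.
    """
    entities = {}
    for row in crm_rows:
        cid = crosswalk[("crm", row["crm_id"])]
        entity = entities.setdefault(cid, {"customer_id": cid})
        entity.update(name=row["name"], segment=row["segment"])
    for row in billing_rows:
        cid = crosswalk[("billing", row["account_no"])]
        entity = entities.setdefault(cid, {"customer_id": cid})
        entity.update(payment_terms=row["payment_terms"])
    return [entities[cid] for cid in sorted(entities)]


conformed_customers = build_conformed_customers(crm_rows, billing_rows, ID_CROSSWALK)
```

`CUST-001` ends up as a single row carrying attributes from both CRM and billing, while `CUST-002` has only its CRM attributes. Downstream marts join on `customer_id` and never need to know that two source keys existed.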

Each vertical slice produces evidence rather than architecture proposals. To keep the model honest, enforce lineage direction in CI: Gold marts must depend on approved upstream semantic contracts, not on other Gold marts.
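The lineage direction rule can be checked mechanically. This is a minimal sketch, assuming you can extract a model-to-parents mapping and a layer label per model from your project; the model names and the `gold_to_gold_violations` helper are hypothetical.

```python
def gold_to_gold_violations(dependencies, layer_of):
    """Return (model, parent) pairs where a Gold model depends on another Gold model."""
    violations = []
    for model, parents in dependencies.items():
        if layer_of.get(model) != "gold":
            continue
        for parent in parents:
            if layer_of.get(parent) == "gold":
                violations.append((model, parent))
    return sorted(violations)


# Hypothetical project metadata: which layer each model belongs to...
layer_of = {
    "conformed_customer": "silver",
    "conformed_order": "silver",
    "mart_active_customers": "gold",
    "mart_revenue": "gold",
    "mart_exec_summary": "gold",
}
# ...and which models each model reads from.
dependencies = {
    "mart_active_customers": ["conformed_customer"],
    "mart_revenue": ["conformed_order", "conformed_customer"],
    "mart_exec_summary": ["mart_revenue"],  # Gold reading Gold: not allowed
}

violations = gold_to_gold_violations(dependencies, layer_of)
```

In a CI job, a non-empty `violations` list would fail the build and print the offending pairs, here `("mart_exec_summary", "mart_revenue")`. The fix is to move the shared logic into a Silver contract that both marts consume.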

4. The Tooling Reality

The ecosystem does not make this easy, and it is worth being honest about why.

dbt Core manages transformation logic and produces lineage, but that lineage is a dependency graph for developers. It tells you which models depend on which, not what a metric means in business terms or who is accountable for it. dbt Cloud adds ownership metadata, documentation surfaces, and data freshness through dbt Explorer, which closes part of that gap, but it remains a tool oriented toward data practitioners rather than business users. Databricks and Google Cloud have moved beyond passively reflecting what your modeling tool exposes: Unity Catalog captures lineage natively, including at the column level, and Dataplex, Google Cloud's governance service for BigQuery, now offers AI-assisted metadata enrichment and automated relationship discovery independently of dbt. Even so, encoding ownership and definitions explicitly in your models remains good practice, because native platform harvesting can tell you what exists but not what it means to the business.

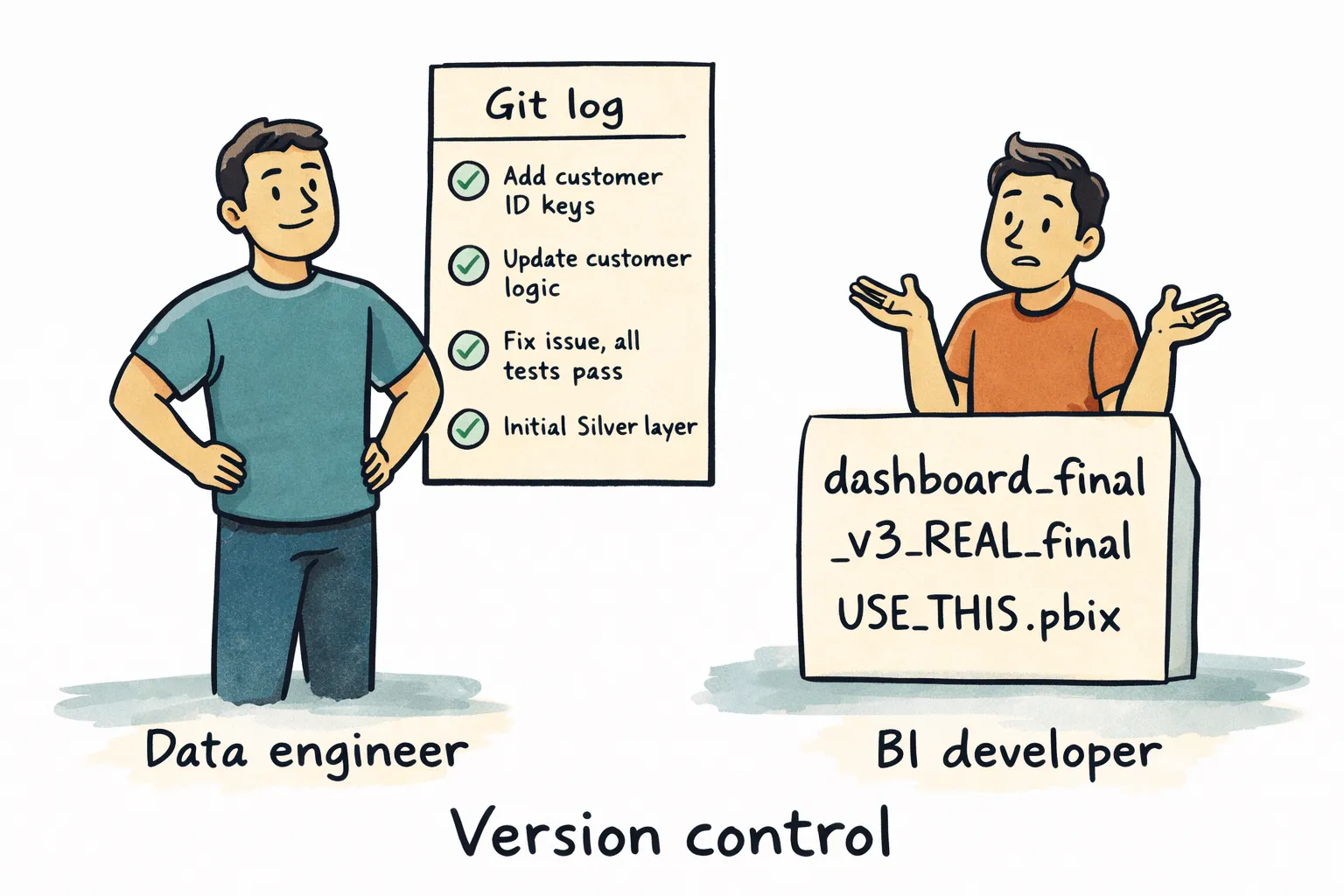

Semantic layers like MetricFlow, dbt Semantic Layer, and LookML try to centralize metric definitions and make them queryable across consumers. That intent is right. But BI tools like Power BI frequently pull data into their own in-memory model and define measures locally. Now the definition of “active customer” can exist in three places at once: a dbt model, a semantic layer definition, and a DAX measure inside a Power BI dataset. They may have been equivalent when the report was first built. Whether they still are is usually unknown, and the definition is bound to diverge at some point, because the lineage stops at the warehouse boundary. Changes to your Gold models do not automatically propagate into the BI tool’s semantic layer, and without third-party tooling nothing alerts you when they diverge. Power BI compounds this further: the standard .pbix format is a binary file, which means it does not diff meaningfully in Git. You cannot see what changed in a measure or a relationship without opening the file. The semantic decisions your BI team makes are largely invisible to version control.

Data catalog and management platforms like Collibra exist specifically to bridge this gap by connecting technical lineage from the modeling layer to business-facing definitions, ownership metadata, and governance workflows in one place. In principle that is exactly what the fragmented ecosystem needs. In practice, implementation takes substantial time, requires integration work across every tool in the stack, and depends on business users actually adopting it as a working tool rather than a compliance artifact. That last part is where most implementations stall. A catalog that developers maintain and business users ignore does not solve the semantic ownership problem. It just gives it a nicer interface.

None of this means you should avoid these tools. It means the fragmentation is real, and the gaps between them do not close themselves. Knowing what each tool actually covers, and being explicit about what it does not, is the organizational work that sits underneath all of it. And even when the technical integration is in place, business users still need to find, trust, and actually use what you have built. That last part is where most of this investment either pays off or quietly gets abandoned.

5. The Signal That Tells You It Is Working

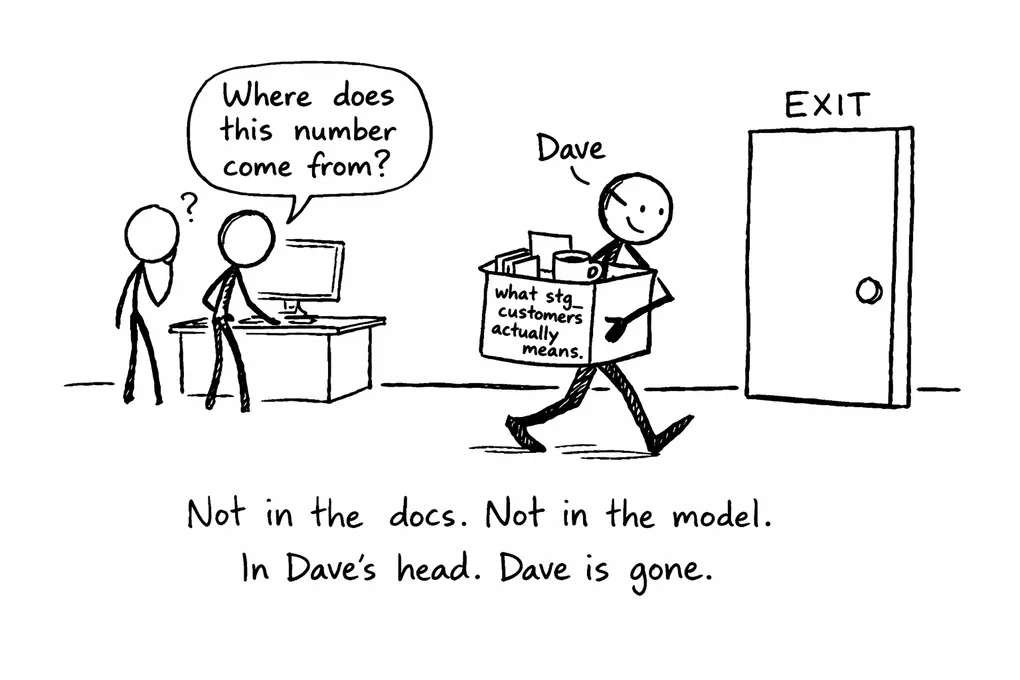

There is a chain that plays out in most organizations more often than anyone wants to admit. A business user has a question about a number. They go to a BI developer. The BI developer knows the report but not where the underlying data comes from, so they go to a data engineer. The data engineer knows the pipeline but not the business context, so they track down a data steward or a consultant who was involved in the original implementation. That person usually holds the answer in memory, not in documentation, not in a model, not in anything traceable.

This chain exists in almost every organization that has not explicitly designed its semantic boundary. The people who understand what source-aligned tables actually represent are typically the ones who were there when the source system was implemented: functional consultants, data stewards, domain experts. Their knowledge is not encoded anywhere. It lives in memory and walks out the door when they move on.

The length of that chain is the most honest signal you have for whether your platform is working. When the semantic layer is doing its job, the answer exists somewhere discoverable: a governed definition, a tested model, a catalog entry with an owner attached to it. When it is not, the answer exists in a person. And chasing that person takes time, interrupts engineers, and produces answers that nobody else can find next time the same question comes up.

Speed matters, but that is almost a side effect. The deeper question is whether knowledge is encoded in the platform or stored in human memory. A platform where business users can trace a number back to its source without routing through three people has solved something real.

6. Where to Start

Most platforms have this problem in some form: unowned semantic space between Silver and Gold, metrics defined in three places, lineage that stops at the warehouse boundary, and business knowledge that lives in a few people’s heads. That is not a failure of effort. It is what happens when layers accumulate faster than the contracts between them get defined.

The way out is not a platform rewrite. It is picking one domain where the pain is already visible and making the semantic contract explicit for that one thing. The right starting point is usually where definitions already conflict, where ownership is unclear, and where the same question gets asked repeatedly. One domain with recurring disputes over a metric definition. One entity where the join logic lives in five different Gold models. One business question that currently requires tracking down two people to answer.

Take one of those and model it through the boundary properly: define the entity, codify the business logic, test it, assign an owner, make the lineage traceable, and repoint the consumers. Measure what changed. That single slice, done well, produces more clarity about what your platform actually needs than months of architecture planning, and it gives you something concrete to bring back to the people who need convincing.

The compounding effect is real. Once one domain has a governed semantic contract, the next one is cheaper because the patterns are established, the ownership model is understood, and the team has seen what good looks like. Knowledge starts accumulating in the platform instead of in the heads of the people who happened to be there at the start. That is what a trustworthy data platform actually feels like from the inside.

While we’re on the subject and I still have your attention, I cannot resist a little dig at Power BI’s pbix format…
