The Modern Data Engineer Part 6: Hard Deletes and the Ghost Row Problem

Incremental pipelines built on updated_at watermarks cannot detect hard deletes. The result is a ghost row in your SCD Type 2 model and quietly wrong analytics downstream.

The Modern Data Engineer Part 6: Hard Deletes and the Ghost Row Problem

Part 4A and Part 4B covered how to design and operate the Silver semantic boundary, including SCD Type 2 history models for conformed entities. This part is about the failure mode that silently breaks those models after you have built them correctly.

1. The question most pipelines never ask

When engineers design an incremental loading pipeline, they think about inserts and updates. A new record appears in the source, the watermark catches it, the pipeline loads it. An existing record changes, the updated_at timestamp advances, the watermark catches that too. The model stays current.

The question that rarely gets asked is: what happens when a record disappears?

Hard deletes happen all the time. A customer requests account deletion. An order gets fully voided and purged. A product is retired and removed from the source system rather than flagged inactive. The record ceases to exist in the source. The updated_at field does not advance, because there is no row left to advance it. The watermark has nothing to catch.

The pipeline runs on schedule, finds nothing new above the watermark, and moves on. Your SCD Type 2 history table still has an open record for that entity, valid_to = NULL in one common convention, a far-future sentinel date in another. Either way, it still looks like an active record.

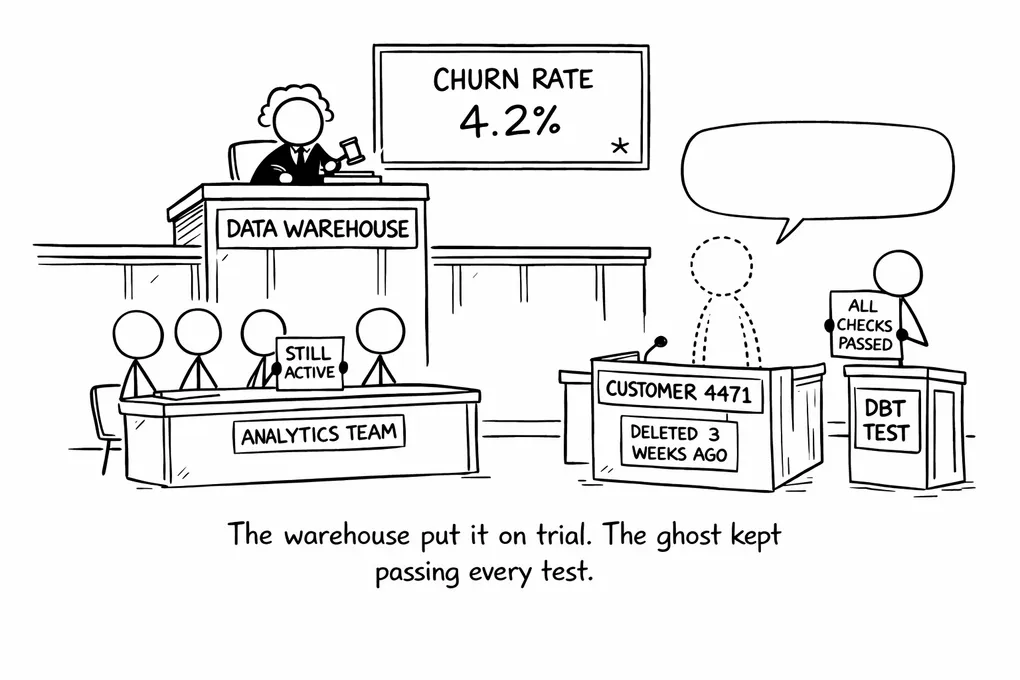

That is the ghost row: a history record representing an entity that no longer exists in the source, kept perpetually alive because the deletion left no signal the pipeline could see.

2. What the ghost row actually does to downstream models

The damage is quiet, which is what makes it persistent. Nobody gets an error. No test fails. The pipeline succeeds, the model refreshes, and the numbers shift imperceptibly.

Consider a customer churn model. It counts customers active at the start of a period and measures how many are still active at the end. If a customer was hard-deleted from the CRM three weeks ago, the churn model still sees them as active today. They did not churn from the platform’s perspective, because the platform was never told they left. Retention rates go up, not because retention improved, but because the denominator is wrong. Revenue attribution models can carry the same problem. A subscription that was cancelled and purged continues to appear as live ARR.

These errors are hard to investigate precisely because they are not errors in any visible sense. The SQL is correct. The pipeline logic is correct. The SCD Type 2 record was created properly and follows every convention. What is missing is the information that the source event that would have closed it never arrived.

3. Why this is structural, not a bug

The limitation is inherent to how watermark-based incremental loading works. A watermark pattern is fundamentally a change detection mechanism: it finds rows whose state advanced past a recorded high-water mark. Deletion leaves no state to advance. It removes the row from the result set entirely.

This is worth stating plainly because it means you cannot fix this by improving the pipeline. You can tune the watermark logic, tighten the schedule, add retry handling, and the ghost row problem will still be there, because a deleted row produces no state change to observe.

Handling hard deletes requires a different detection mechanism layered on top of the incremental load, or replacing it entirely for sources where deletion is common.

4. Detection: three approaches and their tradeoffs

When I use this as an interview question with senior candidates, most get a blank stare. Not because the question is unfair, but because they have not encountered the problem as a distinct design concern before. They have built pipelines and operated them, often for years, without ever having to reason about what those pipelines structurally cannot see. The blank stare itself is informative: it suggests the pipelines they have built probably have this problem right now and nobody has noticed yet.

Good candidates start working toward the answer. They mention full key scans. Some get to soft-delete conventions. A few arrive at CDC. What distinguishes the strong answers is recognising that detection and propagation are two separate problems, and that you have to solve both.

Full snapshot diff (PK comparison) is the most straightforward approach. Pull all active primary keys from the source, compare them against the set of open records in the warehouse, and anything that appears in the warehouse but not in the source has been deleted. This is reliable and works for any source that can enumerate its current records, including most REST APIs. The cost is that it requires a full extract every run. For sources with millions of records that scales poorly, and for sources that cannot efficiently enumerate all active keys it may not be feasible at all. For smaller sources or sources where the latency of deletion detection matters more than extraction cost, it is often the right call.

Soft delete conventions sidestep the problem by making deletions visible as updates. A deleted_at timestamp or an is_deleted flag gets set on the row rather than removing it, and a watermark-based pipeline catches it the same way it catches any other update. This is reliable when the source actually supports it, but that requires per-entity verification. SaaS vendors document their soft delete behaviour inconsistently, and plenty of entities that look like they should follow the convention turn out not to. Even where it is supported, soft deletes are still polling: you are checking whether the deletion flag advanced past the watermark, not receiving a deletion event directly.

WAL-based CDC (Debezium and similar tools) is the only approach here that gives you actual deletion events rather than the absence of expected updates. Change Data Capture reads from the database write-ahead log, the ordered record of every write before it hits storage, which records row deletions as first-class events. Because it reads the log rather than polling the table, each event arrives as it happens rather than waiting for the next pipeline run. Each event carries a commit timestamp from the WAL itself, the time the transaction was written to the log, which is what gives you the difference between a record deleted at 14:03 and a record deleted sometime in the last six hours. This is distinct from the processing timestamp that reflects when the CDC tool consumed the event, which is later and less useful for history accuracy. The constraint is access: WAL replication requires database-level connectivity and appropriate replication permissions, which are often unavailable for managed SaaS sources where you are connecting via API rather than directly to the underlying database.

Each approach involves a different trade-off between reliability, cost, and access requirements. The choice usually depends on what the source actually allows, not on what would be ideal in the abstract.

5. Propagation: closing the record and the deletion marker

Once you have the delete signal, the next question is what to do with it in the SCD Type 2 layer.

The minimum is to close the open record: set valid_to to the detected deletion timestamp and mark the record inactive. Once that write lands, downstream models that query for open records will stop returning the entity, which means a churn model built after that point will correctly see the customer as gone rather than perpetually active.

The question of what to put in valid_to matters more than it first appears, and it connects to a distinction worth understanding. What you have from a snapshot and polling-based detection is detected_at: the timestamp when your pipeline ran and observed that the record was gone. What you want for an accurate history is deleted_at: the timestamp when the deletion actually happened in the source. For snapshot diff these are never the same, because the deletion could have happened hours or days before the pipeline run that caught it, and there is no way to recover the precise time. Soft deletes are better on this point: if the source writes a deleted_at timestamp to the row at the moment of deletion, the pipeline can read it directly, and the precision loss is minimal. CDC gives you the WAL commit time, which is the closest approximation available and does not depend on the source application populating a field correctly.

This distinction matters for how honest you are about the accuracy of your historical model. A history table built from daily snapshots might have valid_to values that are accurate to within 24 hours at best. For many analytics use cases that precision is sufficient, but for anything that needs precise event-time attribution it is a real limitation worth surfacing in the model documentation rather than leaving as an implicit assumption consumers have to discover for themselves.

Beyond closing the record, there is a second step that matters for rebuild accuracy: the tombstone row. Closing the record removes the ghost, but it does not encode the deletion as a fact in the history table. If you ever need to rebuild the SCD Type 2 model from source history, a closed record with no tombstone loses the information that deletion happened at all. A reader looking at the history sees a record that was open until valid_to, with no subsequent state. Was it deleted? Migrated to a different identifier? Merged into another entity? The closed record alone does not say.

A deletion marker row solves this by adding an explicit record representing the deleted state: same entity key, valid_from set to the detected deletion timestamp, a status or type indicator marking it as a deletion event. The history now has a complete, rebuildable representation of what happened to the entity. The deletion is a recoverable fact, not just an absence.

The deletion marker row solves the operational problem correctly: once it is written, downstream models see the entity as gone. What it does not solve on its own is rebuild fidelity. If the Silver layer is ever dropped and rebuilt from the raw layer, the raw layer has no record that the deletion happened. It contains what arrived from the source, and a hard delete produces nothing in that layer: no row, no signal, no timestamp. A rebuild from raw reproduces the ghost row because there is nothing in the raw layer to trigger the closing logic or write the deletion marker. To make a full Silver rebuild possible, you need a separate technical table in the raw layer that records every detected deletion as a durable fact: the primary key of the deleted entity and the timestamp at which the deletion was detected. This table is not a business-facing artifact, has no analytical consumers, and does not replace the deletion marker row in Silver. It exists solely as the raw-layer anchor that a complete rebuild requires. Without it, the Silver layer is accurate as long as you never rebuild it from scratch, which is a fragile guarantee to depend on.

(In Kafka and Debezium literature, the term “tombstone” refers to a different mechanism: a null-value record used for log compaction. The concept here is the SCD row pattern, not the Kafka construct.)

6. What a complete answer looks like

The reason “what happens when a source record is hard-deleted?” works as an interview question is that it does not have a single answer. It has several, layered on top of each other: whether the pipeline can detect the deletion at all, how that signal propagates to close the SCD record, whether the timestamp you write to valid_to reflects when deletion happened or merely when you noticed it, and whether the history table will survive a rebuild with the deletion encoded as a recoverable fact. A candidate who has thought through all of those layers has had to reason about detection, propagation, timestamp precision, and history model integrity as distinct concerns, which is precisely the kind of multi-layer pipeline reasoning that separates someone who has built these systems from someone who has operated them without stress-testing the assumptions.

Recognising that watermark-based incremental loads are inherently growth-only is the prerequisite. From there, a solid answer distinguishes detection from propagation and can articulate at least two detection strategies with their tradeoffs. A strong answer also knows why detected_at and deleted_at are not the same and what that means for the valid_to value you write to the history table.

The deletion marker question is where it gets interesting. Most candidates who get this far have thought about closing the record. Fewer have thought about what happens when you need to rebuild from source. If your closed record contains no signal that deletion happened, the rebuild produces a model that looks identical to one where the record simply stopped being updated. That is a problem that tends to stay invisible until you actually need to do a rebuild, at which point you are trying to reconstruct the deletion history from source systems that may no longer carry it.

7. Connecting back to the Silver layer

Parts 4A and 4B argued that Conformed Entities in the Silver semantic layer are where shared business logic should be encoded once, tested, and consumed by everything downstream. SCD Type 2 history belongs in that layer, because it is the representation of how entities change over time, and that representation is shared across every Gold mart and downstream model that touches the entity.

The ghost row problem is what happens when that history model is corrupted silently, without any visible error. A customer who no longer exists in the source continues to appear in every mart that consumes the history, and the metrics built on top of those marts are wrong in ways that are difficult to detect precisely because the data looks clean and standard data tests have nothing to flag. A row that is structurally valid, correctly typed, and referentially intact will pass every quality check even if the entity it represents ceased to exist months ago.

Getting hard delete handling right is part of what it means for the Silver layer to accurately reflect the state of the source, including its absences. When that contract is not met, the governance work described in Part 5 operates on a foundation that is already compromised. You can trace a metric back to a Conformed Entity, identify the owner, verify the model tests pass, and still be looking at numbers that are wrong because the entity table has ghost rows the tests have no way to detect.

The fix is architectural and it belongs upstream, in the ingestion design, before the SCD Type 2 model is built. Once ghost rows have accumulated for months, the cleanup is painful: you need to establish ground truth from the source, identify which open records should have been closed, determine approximate deletion timestamps, and decide whether to introduce deletion marker rows retroactively. That remediation is survivable the first time, but the ingestion design that caused it will produce the same problem again unless it is actually changed.

Scope note: This post covers hard deletes as a pipeline and history modelling problem. It does not cover the intersection with PII obligations. A “forget me” request under GDPR is a different kind of deletion event: the record may need to be purged or anonymised across the entire warehouse, including history tables that were built to be immutable. That involves deletion registries, downstream erasure jobs, and decisions about what anonymisation means for a conformed entity that other models depend on. It deserves its own treatment, and I may return to it in a future post.

Part 7 looks at how ELT and ETL products handle hard deletes in practice, covering Fivetran, Airbyte, dlt, and a few others, with attention to what the documentation tends to understate and what an engineer should actually verify before trusting that the connector has this covered.

Join the Discussion

Thought this was interesting? I'd love to hear your perspective on LinkedIn.